Bees vs. drones: what’s the difference? Drones have to be coded artificially, while humans have all the instructions coded in naturally. Our parents, friends, and teachers may have taught us many things, but our internal programming isn’t one of them. That programming includes our spatial sense, which is built with the help of our eyes.

It is amazing how a simple bee can avoid obstacles and navigate our three-dimensional world far better than modern high-tech drones. Bees manage this with only their eyes (cameras) as inputs and a much less powerful processing unit (the bee brain), while modern drones carry powerful processors, multiple cameras, gyro sensors, altitude sensors, and more.

MODERN DIGITAL CAMERAS PERCEIVE COLORS AND IMAGE DETAILS BETTER THAN THE HUMAN EYE

This suggests that the secret of perceiving our spatial environment lies not in processing power or the number of sensors, but in the design, programming, and optimization of visual inputs. The second and third elements count the most, because we can design pretty much anything, and the image quality of digital cameras has already surpassed that of our eyes. The evolution of drones depends on getting programming and optimization right.

Bees vs. Drones: Perception of near and far in indoor environments

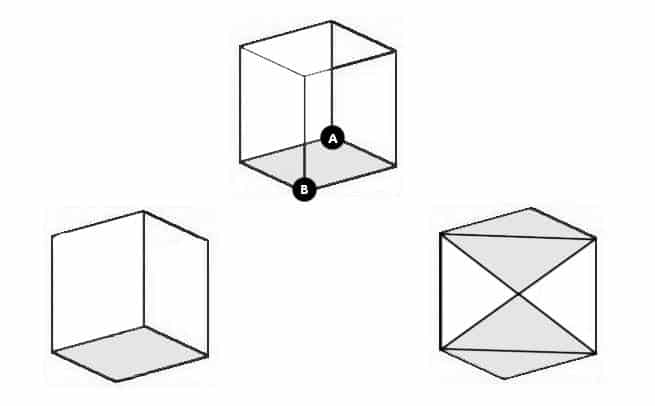

To explain this, I have a very simple puzzle for your eyes and curiosity to feast on. The 3D cube above is nothing but lines on a flat surface. Yet when we take in the picture as a whole, the 2D image forces us to see it as a 3D cube because of the edges, and especially because of the role point A plays in relation to point B.

If you treat point A as nearer than point B, you appear to be viewing the colored bottom face of the cube from below. If you treat point B as nearer than point A, you appear to be viewing the colored face of the cube from above. This simple relation between points A and B serves as a strong cue to their spatial depth and to the orientation of the cube as a whole.
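The ambiguity can be checked numerically. Below is a minimal sketch that assumes the figure is a parallel (orthographic) drawing of a wireframe cube, which is how such cubes are usually drawn. Two solid cubes, one the depth-reversed mirror image of the other, produce exactly the same set of 2D corner positions. Yet the corner that is nearest in one reading is the farthest in the other, which is the same trade-off between points A and B described above. The helper names (`rotate`, `project`, `depth`), the tilt angles, and the "smaller z is nearer" convention are all illustrative assumptions.

```python
import itertools
import math

def rotate(p, ax, ay):
    """Rotate p = (x, y, z) about the x-axis by ax, then about the y-axis by ay (radians)."""
    x, y, z = p
    y, z = y * math.cos(ax) - z * math.sin(ax), y * math.sin(ax) + z * math.cos(ax)
    x, z = x * math.cos(ay) + z * math.sin(ay), -x * math.sin(ay) + z * math.cos(ay)
    return (x, y, z)

def project(p):
    """Orthographic projection onto the image plane: the depth coordinate z is discarded."""
    return (p[0], p[1])

def depth(p):
    """Assumed convention: the viewer looks along +z, so a smaller z means nearer."""
    return p[2]

# Reading 1: a unit cube centred on the origin, tilted by arbitrarily chosen angles.
cube_1 = [rotate(c, math.radians(30), math.radians(40))
          for c in itertools.product((-0.5, 0.5), repeat=3)]

# Reading 2: the same cube mirrored through the image plane (depth reversed).
cube_2 = [(x, y, -z) for (x, y, z) in cube_1]

# Both cubes land on exactly the same 2D corner positions...
same_image = sorted(map(project, cube_1)) == sorted(map(project, cube_2))

# ...but the nearest corner of one reading is the farthest corner of the other.
near_1 = project(min(cube_1, key=depth))
far_1 = project(max(cube_1, key=depth))
near_2 = project(min(cube_2, key=depth))

print("Identical 2D image:", same_image)
print("Nearest corner, reading 1:", near_1)
print("Nearest corner, reading 2:", near_2, "(the farthest corner of reading 1)")
```

Nothing in the 2D coordinates alone can settle which reading is correct. The choice has to come from the viewer's interpretation, just as it does when you look at the figure.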

What is interesting here is that the image lies on a 2D plane. More interesting still, the colored face of the cube shifts from top to bottom depending on which point you decide is nearer. This suggests that when we observe our surroundings, our brain processes edges, corners, and angles as a priority to give us a sense of space. The colors and other complex details are filled in afterward.

Biological brains are highly optimized to take edges into account first and process everything else later. The same is true of the bee; drones, however, don’t work that way. Instead, their image processing software focuses on Regions of Interest (ROIs) to interpret the picture. Gyro sensors track the drone’s orientation in space, and altitude sensors help it maintain its position.
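A common way to make "edges first" concrete in machine vision is a gradient-based edge detector such as the Sobel operator. The sketch below is a stand-in chosen for illustration. It does not model how bee or human vision actually works. It marks pixels where brightness changes sharply, while the uniform interior of a shape and the uniform background produce no response, whatever their brightness. The function name `sobel_edges`, the 8x8 test image, and the threshold of 100 are hypothetical choices.

```python
import math

# Sobel kernels: GX responds to left-right brightness changes, GY to top-bottom changes.
GX = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
GY = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]

def sobel_edges(img, threshold):
    """Return a binary edge map: 1 where the Sobel gradient magnitude exceeds threshold.

    Border pixels stay 0 because the 3x3 window does not fit there.
    """
    h, w = len(img), len(img[0])
    edges = [[0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = sum(GX[i][j] * img[r + i - 1][c + j - 1] for i in range(3) for j in range(3))
            gy = sum(GY[i][j] * img[r + i - 1][c + j - 1] for i in range(3) for j in range(3))
            if math.hypot(gx, gy) > threshold:
                edges[r][c] = 1
    return edges

# Hypothetical 8x8 grayscale image: a bright square (200) on a dark background (50).
image = [[200 if 2 <= r <= 5 and 2 <= c <= 5 else 50 for c in range(8)] for r in range(8)]

edge_map = sobel_edges(image, threshold=100)
for row in edge_map:
    print("".join("#" if v else "." for v in row))
```

The printout is a ring of `#` marks tracing the square's outline with an empty middle. Only the boundary, where the shape's structure lives, survives this first pass. Any filling in of surface detail would have to come from a later stage.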

Bees vs. Drones: Spatial perception of outdoor environments

What we have discussed so far assumes an indoor environment, where bees rock and AI drones crash. The principle of perceiving the outdoor environment is basically the same, but here bees use the Sun as a reference point while drones rely on GPS for guidance. Because an outdoor environment contains more objects, and more varied ones, spatial perception becomes more complex as well.

The natural world has few sharp edges and corners. Trees, mountains, flowers, water, and everything else in nature are shaped in patterns that mostly avoid straight edges and cube-like forms. As we build machine vision, we must also learn to take this complexity into account and make better drones that can navigate indoor and outdoor environments as confidently as bees do.